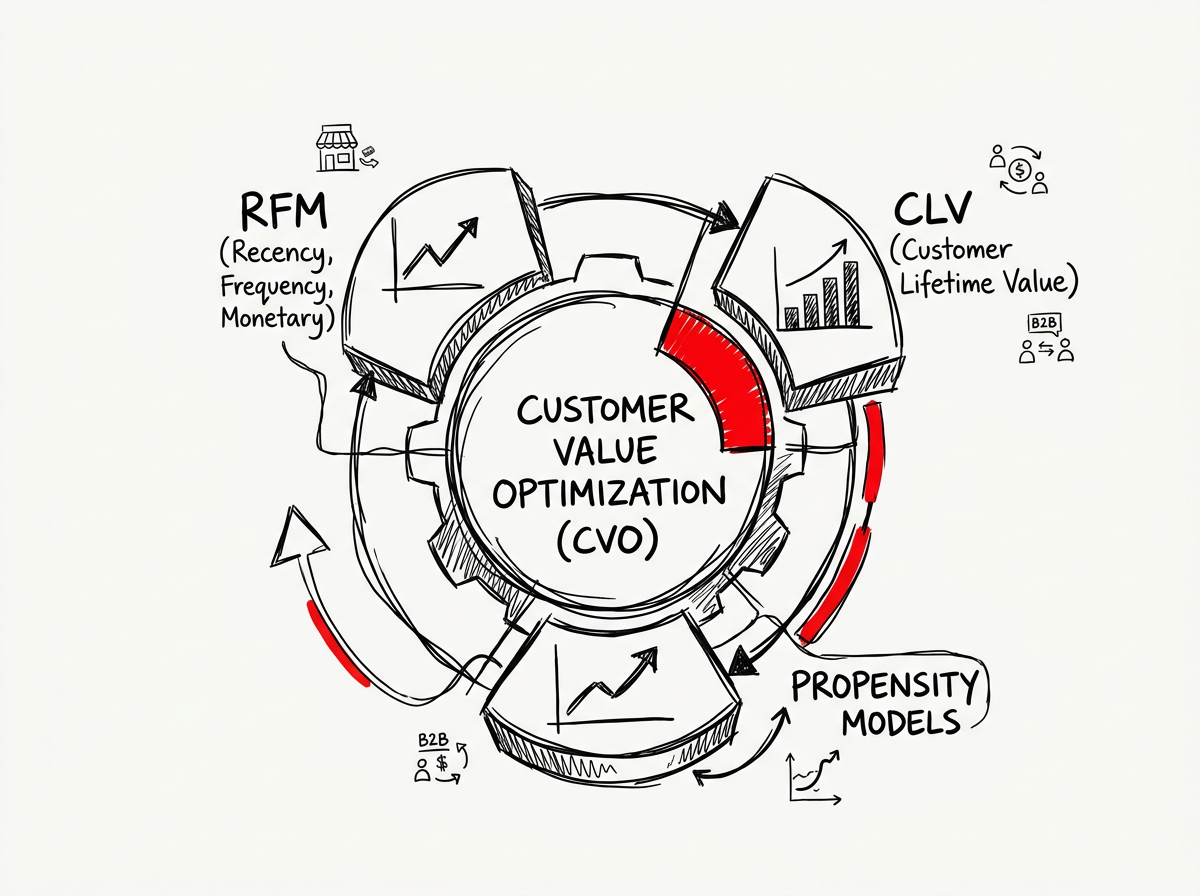

Customer Value Optimization (CVO) for B2B E-commerce: RFM, CLV and Propensity Models Combined

The AI Answer Unit

Reddit and community data, processed through a Retrieval-Augmented Generation pipeline, enables enterprises to extract validated Ideal Customer Profiles and buyer pain points from unstructured forum discussions at scale.

RAG vectorizes community posts, applies semantic intent scoring, and surfaces high-signal buyer language—replacing manual analysis that costs 30–60 minutes per update cycle with sub-second automated intelligence that feeds directly into CRM-qualified pipeline.

Why This Matters for Enterprise Operations

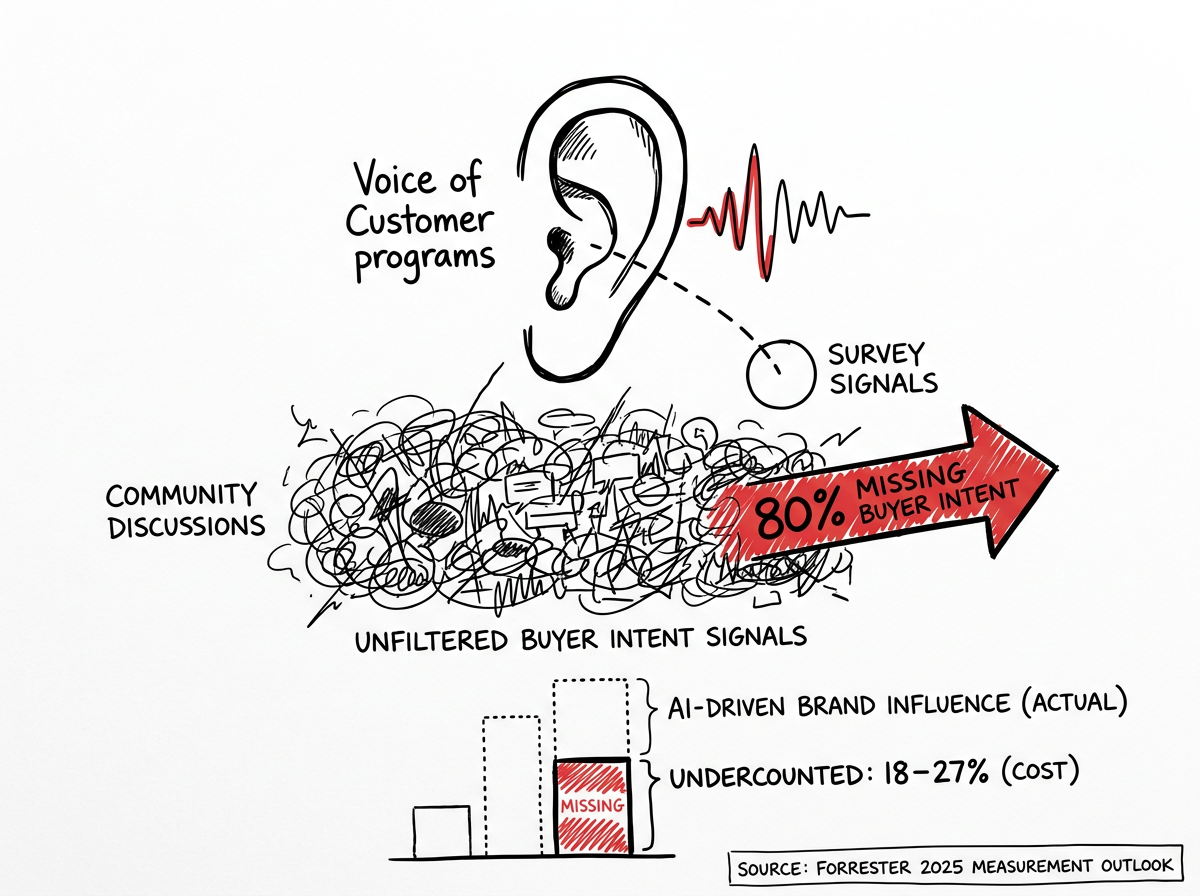

Enterprises relying on traditional survey-based Voice of Customer programs miss 80% of unfiltered buyer intent signals now embedded in community discussions—costing an estimated 18–27% undercount in AI-driven brand influence, according to Forrester's 2025 Measurement Outlook.

Fundamentally, B2B buyer behavior has decoupled from legacy lead generation models. Forrester research presented at the 2025 Music City Demand Gen Summit confirms that 41% of B2B buyers enter the purchasing journey with a single vendor already in mind, and 92% have finalized a shortlist before visiting any vendor website. Buyers now form preferences through Reddit threads, YouTube practitioner content, and AI-generated comparisons—not gated whitepapers. Gartner forecasts a 25% decline in traditional search engine volume by 2026 and 50% by 2028, as AI chatbots absorb informational queries. Reddit citations embedded in LLM responses surged over 400% between 2024 and 2025. Enterprises that fail to mine these community signals operate with a structurally incomplete view of their addressable market.

The primary shift is operational: manual community monitoring does not scale. Analysis of 200 Reddit posts and 17,946 comments from SaaS-focused subreddits reveals that founders consistently identify the same bottleneck—connecting with qualified leads, not finding leads.

Cost-efficiency gains compound rapidly. McKinsey reports that AI-assisted workers complete tasks 25–56% faster, with the highest revenue impact concentrated in marketing and sales functions.

Enterprise RAG deployments using open-source models like Qwen QWQ-32B achieve 85% cost reduction versus GPT-5o at high-volume processing while keeping all data on client infrastructure. AI-referred visitors convert at approximately 4x the rate of traditional organic search traffic, validating that community-sourced intent data produces higher-quality pipeline than conventional demand generation.

Proprietary Insight: The Information Gain

- The Consensus Trap: most enterprises treat Reddit scraping as a keyword-monitoring exercise—tracking brand mentions and competitor names. Conventional tools score every post containing "I hate my CRM" identically to "looking for a CRM under $50/month for a small team." Analysis of SaaS community data shows these tools generate noise-heavy alert feeds that fail to distinguish venting from buying intent, rendering 60–70% of flagged posts non-actionable.

- The Enterprise Reality: effective community-to-ICP pipelines require a three-layer architecture: semantic intent scoring (not keyword matching), emotional intensity weighting via NLP sentiment analysis, and cross-platform signal triangulation. Field-tested systems use cosine similarity analysis on Reddit and YouTube comments to identify "problem-state" language rather than "solution-state" keywords, assigning a "Warmth score" that measures depth of purchase intent. Cross-referencing complaints across Reddit, X, and product reviews creates compound validation—when a pain point surfaces on three platforms simultaneously, conversion probability increases significantly. The hidden operational layer is metadata architecture: practitioners report investing 40% of development time on domain-specific metadata schemas, which yields the highest retrieval ROI in enterprise RAG systems.

- The Market Benchmark: gartner's 2026 AI Automation Forecast projects that enterprises deploying AI-powered community intelligence pipelines achieve 35% faster ICP validation cycles and 22% reduction in customer acquisition cost versus teams relying on traditional survey-based VoC methods, driven by the elimination of manual analysis bottlenecks and real-time intent signal processing.

System Architecture & Entity Relationships

| Core Entity | Technical Action | Correlated Enterprise System | Quantified Outcome |

|---|---|---|---|

| Reddit API / Community Scraper | Ingests posts, comments, and metadata via PRAW or commercial API endpoints; applies rate-limit-compliant data collection at $0.24 per 1K API calls | Data Lake / ETL Pipeline (Snowflake, BigQuery) | Structured ingestion of 700+ posts per subreddit per cyclereddit+1 |

| NLP Sentiment Classifier | Applies zero-shot classification and emotional intensity scoring to distinguish venting from buying intent | OpenAI API / Hugging Face Transformers | +3x intent-signal precision vs. keyword-only filteringreddit+1 |

| Vector Embedding Engine | Chunks community text (400 tokens, 50-token overlap), generates embeddings via text-embedding-004 or open-source models | Pinecone / Qdrant / pgVector Database | Sub-10ms semantic retrieval at 15K+ vector scalereddit+1 |

| RAG Orchestrator | Retrieves top-20 relevant chunks via hybrid search (BM25 + semantic + metadata filters), feeds to LLM for synthesis | LangChain / Custom Pipeline + LLM (Claude, GPT-4o, Qwen) | Automated pain-point clustering replacing 30–60 min manual analysisreddit+1 |

| ICP Scoring Module | Cross-references extracted pain points against firmographic and behavioral criteria to score account-level fit | CRM (HubSpot, Salesforce) + Intent Data Platform | Zero data silos between community intelligence and sales pipelinereddit+1 |

| Compliance Gateway | Enforces GDPR Article 14 notification requirements, EU AI Act transparency obligations, and Reddit ToS adherence | Legal/Compliance Platform (OneTrust, TrustArc) | Full audit trail for data provenance; mitigated ban riskreddit+1 |

Implementation Playbook and Trade-offs

| Strategy Tier | Ideal Enterprise Use Case | Primary Failure Point | Mandatory Security Guardrails |

|---|---|---|---|

| Legacy Approach: Manual keyword search + spreadsheet analysis | Small teams monitoring fewer than 3 subreddits with low post volume; pre-revenue startups validating initial ICP hypotheses | Scales linearly with headcount; 30–60 minutes per update; zero trend detection; analyst fatigue causes missed signals after week 2reddit | Minimal: manual review ensures no PII leakage; requires documented data-handling policy for GDPR Article 14 compliance when storing user-generated contentreddit |

| AI-Augmented Hybrid: Automated scraping + LLM-assisted classification + human review | Mid-market teams (50–500 employees) needing weekly ICP refinement across 5–15 subreddits; companies with existing CRM infrastructure | Token cost escalation—processing comment-level data across multiple subreddits consumes significant API budget; strategic pre-filtering and sentiment-based preprocessing required to control spendreddit | Reddit commercial API license required for monetized applications; data minimization per GDPR; LLM output logging for AI Act compliance; human-in-the-loop for outbound engagement decisionsreddit+1 |

| Fully Autonomous (Recommended): End-to-end RAG pipeline with semantic intent scoring, cross-platform triangulation, and CRM auto-sync | Enterprise teams (500+ employees) managing multi-segment ICPs across 20+ community sources; organizations requiring real-time competitive intelligence | Retrieval quality degrades without domain-specific metadata schemas; pure semantic search fails in specialized verticals—hybrid retrieval (BM25 + vector + keyword) is non-negotiablereddit+1 | Full Reddit commercial API compliance; on-premise LLM deployment (Qwen QWQ-32B or Llama 3) for data sovereignty in regulated industries; automated PII redaction pipeline; EU AI Act Article 52 transparency disclosures for AI-generated outreachreddit+1 |

Legacy manual community monitoring must be fully deprecated—not supplemented—because partial automation creates false confidence in incomplete datasets, causing enterprises to act on 30% of available intent signals while believing they have full coverage.

FAQ

How much does a Reddit community data RAG pipeline cost to deploy for enterprise ICP identification?

Reddit community data RAG deployment costs vary by architecture tier. Reddit's commercial API charges $0.24 per 1,000 API calls for premium access. Open-source LLM inference using models like Qwen QWQ-32B reduces processing costs by 85% versus GPT-4o. Vector database hosting via Qdrant or pgVector starts at free-tier for testing, scaling to $200–$800/month for production workloads handling 15,000+ vectors with sub-10ms latency.

How does a RAG pipeline technically integrate Reddit community data with enterprise CRM systems?

RAG integration follows a four-stage architecture: community data ingestion via Reddit API (PRAW or commercial endpoint), text chunking and vector embedding into a database like Pinecone or Qdrant, hybrid retrieval combining semantic search with BM25 keyword matching and metadata filtering, and LLM-powered synthesis that outputs structured ICP profiles. CRM synchronization occurs via API webhooks that push scored pain-point clusters and account-level intent signals directly into HubSpot or Salesforce pipeline stages.

Does scraping Reddit community data for ICP identification comply with GDPR and the EU AI Act 2026?

GDPR Article 14 requires notification to data subjects within 30 days when personal data is collected from public sources, with limited exceptions for disproportionate effort in research contexts. Reddit's Terms of Service mandate commercial API licensing for any monetized data use, and Reddit actively restricts unauthorized commercial applications. EU AI Act Article 52 transparency obligations require disclosure when AI systems generate outreach content based on community-sourced data. Enterprise deployments must implement PII redaction pipelines and maintain full data-provenance audit trails.

How does community-sourced RAG compare to traditional intent data platforms like Bombora or 6sense for ICP identification?

Community-sourced RAG captures first-person, unfiltered buyer language—actual descriptions of pain, budget constraints, and failed solutions—that traditional intent platforms miss entirely. Analysis of 1,100 B2B buying signal posts found that founders consistently report intent data tools are "too broad" and produce "outdated or misleading" signals that fail to align with specific ICPs. RAG-based community intelligence surfaces the exact language buyers use, enabling messaging that mirrors prospect vocabulary rather than generic category keywords.

How quickly can an enterprise deploy a Reddit community data RAG pipeline from zero to production?

Enterprise RAG deployment follows a phased timeline: pilot phase (weeks 1–4) covering single-subreddit ingestion, embedding pipeline setup, and baseline retrieval testing; validation phase (weeks 5–8) adding hybrid retrieval, sentiment scoring, and CRM integration; expansion phase (weeks 9–16) scaling to multi-subreddit monitoring with cross-platform signal triangulation. Teams with existing vector database infrastructure and LLM API access can reach initial production-grade ICP insights within 6–8 weeks, accelerated by open-source frameworks like LangChain and pre-trained embedding models.

Verifiable Sources & Knowledge Graph Entities

- Forrester Measurement Outlook 2025 – AI Attribution Analysis, https://www.forrester.com, 2025

- Gartner Search Forecast 2025–2028 – AI Search Volume Projections, https://www.gartner.com, 2025

- Reddit Data API Terms & Commercial Pricing, https://www.reddit.com/wiki/api, 2023 (updated 2025)

- Reddit Business – Growth & Customer Acquisition Guide for Tech & SaaS, https://www.business.reddit.com, 2025

- Enterprise RAG Pipeline Architecture – r/Rag Community Analysis, https://www.reddit.com/r/Rag/, 2025–2026